|

Data mapping is a painstakingly slow process. Having worked closely with customers of all sizes, we’ve gained a profound sense of empathy for the challenges involved in cataloging vendors and assembling all of the necessary information for compliance with global regulations, such as generating Records of Processing Activities (RoPA) for GDPR, and enforcing cookie consent worldwide. Read more.

0 Comments

Deep collaboration works like a flywheel that harnesses the collective energy of a data team and directs it towards new opportunities and innovation. Great achievements emerge when teams collaborate in a way that integrates and leverages their individual strengths towards a common goal. Read more... It's been a busy 3 years in which I've had 2 kids and a few job changes. I'm a little over a year into my tenure at Noteable and quite happy to be working on similar solutions to those I tried to implement at MapR. More to come soon...

Today is my last day at MapR. Very sad to go because it's the best group of folks I've had the pleasure to work with. Always amazed by the extra lengths our team would go to in order to squeeze out that extra feature or build something special for our customers and I have the utmost respect for everybody who played a part in getting things done.

But it's time to move on after three years and find something closer to home. Will miss you all until we see each other again :) In my previous blog in this series, Kubernetized Machine Learning and AI Using Kubeflow, I covered the Kubeflow project and how it integrates with and complements the MapR Data Platform.

Kubeflow is an application deployment framework and software repo for machine learning toolkits that run in Kubernetes. In Kubeflow, Kubernetes namespaces are used to provide workflow isolation and per-tenant compute allocation capabilities. When combined with the global namespace and unified security capabilities provided by MapR, Kubeflow + MapR provides a fully comprehensive, multi-tenant environment for machine learning and AI applications. Read More... In my previous blog, End-to-End Machine Learning Using Containerization, I covered the advantages of doing machine learning using microservices and how containerization can improve every step of the workflow by providing:

Read More... The Secret Behind the New AI Spring: Transfer Learning

Transfer learning has democratized artificial intelligence. A real-world example shows how. As enterprises strive to find competitive advantages, artificial intelligence stands out as a "new" technology that can bring benefits to their organization. Model building is a big part of AI, but it is a time-consuming chore, so anything an enterprise can do to make faster progress is a plus. That includes finding ways to avoid reinventing the wheel when it comes to building AI models. Read More... If you missed the live session, you can catch me and Ralf Klinkenberg -- Co-founder and chief of data science research at RapidMiner -- discussing the how's and why's of predictive maintenance below:

link... Lately, we've been talking a lot about containerization and how Kubernetes and MapR can pair up to enhance the productivity of your data science teams and increase the time to insights. In this multi-part blog series, I will start with a high-level overview of why Kubernetes and containerization are appealing for data science environments. In a later iteration, I will provide an example of a framework that enables Kubernetized data science on your MapR cluster.

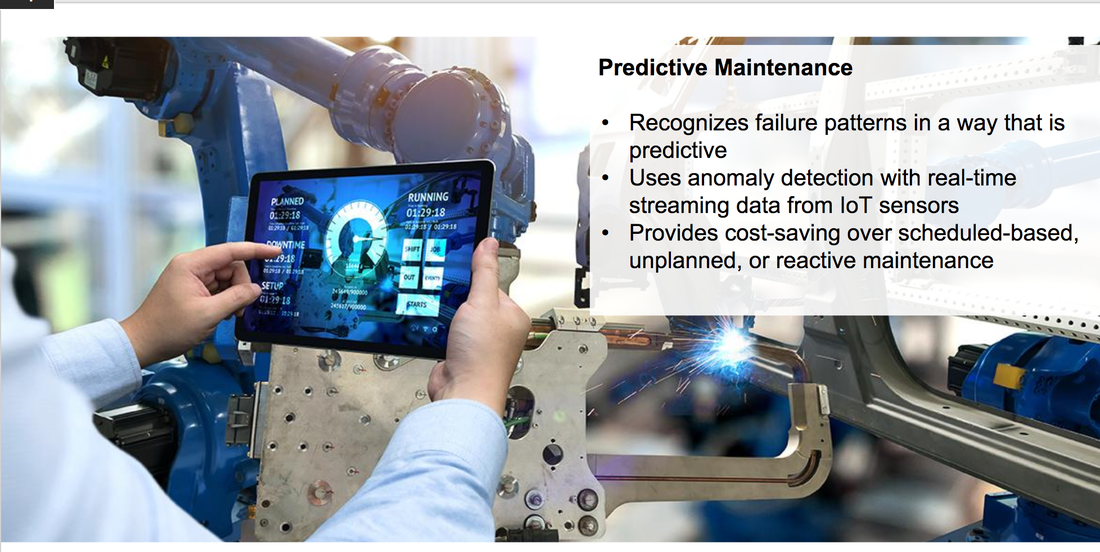

Read more... Predictive maintenance (PdM) has emerged as a primary advanced analytics use case as manufacturers have sought increased operational efficiency and productivity and as a response to technological innovations like the Internet of Things (IoT) and edge computing.

|

AuthorRachel Silver is a Principal Technical Product Manager Archives

November 2023

|

RSS Feed

RSS Feed